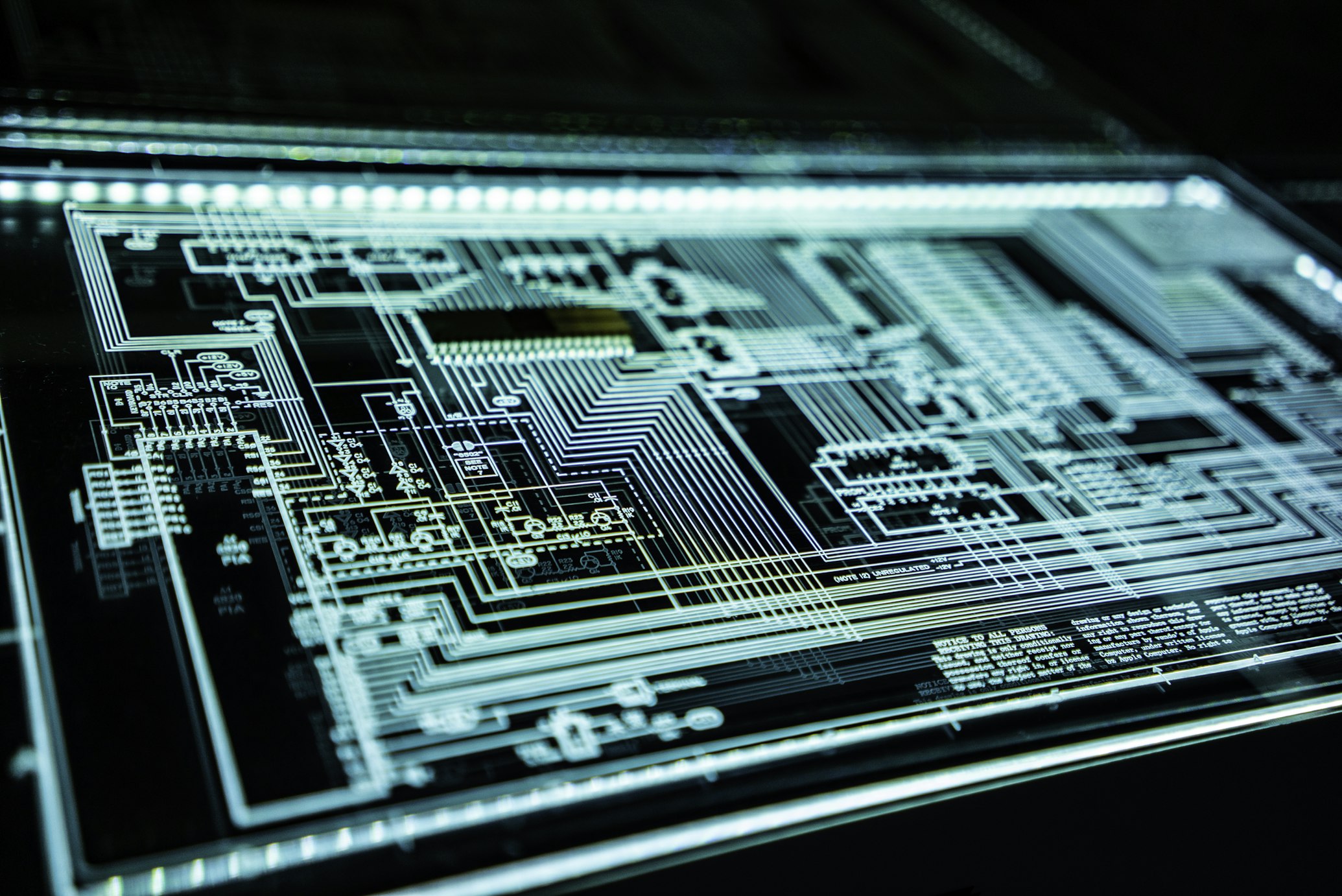

NVIDIA GTC 2026: Unveiling the Rubin Architecture and the Shift to Agent-First Infrastructure

Image source: https://www.nvidia.com/en-us/gtc/

The Pivot to Agentic Intelligence: NVIDIA GTC 2026 Keynote Analysis

On March 16, 2026, at the SAP Center in San Jose, NVIDIA CEO Jensen Huang delivered a landmark keynote at GTC 2026, officially signaling the end of the "Training Era" and the dawn of the "Agentic Era." With over 39,000 attendees from 190 countries watching, Huang introduced the Rubin architecture, a full-stack infrastructure overhaul designed specifically to solve the bottlenecks of autonomous AI agents and large-scale inference.

The core message was clear: while the previous three years were defined by scaling model parameters, the next three will be defined by scaling agentic workflows. This shift requires a fundamental change in hardware, moving away from simple GPU clusters toward integrated "AI Factories" where CPUs and GPUs share the load of complex, multi-step reasoning.

---

Technical Deep Dive: The Rubin Platform and Vera CPU

The centerpiece of the announcement is the NVIDIA Rubin platform, a six-chip architecture that integrates the next generation of GPUs with the newly unveiled Vera CPU.

#### 1. The Vera CPU (Olympus Cores)

For the first time in GTC history, the CPU shared equal billing with the GPU. The Vera CPU features 88 custom "Olympus" Arm cores. According to Dion Harris, NVIDIA’s head of AI infrastructure, the industry has hit a "CPU bottleneck": agentic tasks, which involve sequential logic, tool use, and data orchestration, are being slowed down by traditional processor architectures. The Vera CPU is designed to handle the "orchestration layer" of AI agents, managing the data movement and state-tracking required for long-running autonomous tasks.

#### 2. The Rubin GPU and HBM4

The Rubin GPU represents a massive leap in memory bandwidth, using High Bandwidth Memory (HBM4). This is critical for the "Agentic Think-Act-Observe" loop. A specialized variant, the Rubin CPX, was introduced to handle the "context stage" of long-context inference using GDDR7 memory. This allows agents to maintain massive context windows (up to 1 million tokens in the companion Gemini 3.1 Pro models) without the prohibitive latency costs seen in the Blackwell generation.

#### 3. NVLink 6 and Accelerated Networking

To connect these components, NVIDIA introduced the NVLink 6 Switch, which enables rack-scale systems to operate as a single unified computer. This is essential for "Physical AI" applications such as robotics and factory automation, where sub-millisecond latency between sensing, reasoning, and acting is non-negotiable.

---

The Strategic Intel Partnership and AI Racks

In a move that surprised many analysts, NVIDIA detailed a deepened $5 billion partnership with Intel. Under the "NVLink Fusion" initiative, Intel’s Xeon 6 CPUs (Sierra Forest and Granite Rapids) will be embedded directly into NVIDIA’s AI racks. This partnership addresses the massive demand for general-purpose compute within AI clusters. As agentic workloads grow, the need for x86-based sequential processing to coordinate between thousands of specialized agents has become a primary driver of infrastructure sales.

---

Business Implications: The Rise of the Agent Economy

For business leaders, GTC 2026 confirms that the ROI of AI is shifting from "chatbots" to "agents." Gartner’s latest forecast, cited during the event, predicts that 40% of enterprise applications will incorporate task-specific agentic AI by the end of 2026, up from less than 5% in 2025.

#### The Cost of Intelligence is Crashing

A recurring theme at GTC was the dramatic reduction in the cost of inference. With the Rubin architecture, the cost of running a reasoning-heavy model like GPT-5.2 or Gemini 3.1 Pro has dropped by an estimated 60% compared to the previous year. This allows startups to build "AI-native" companies with minimal staff, leveraging agents to handle everything from software development to marketing operations.

#### Competitive Landscape: Google's Counter-Move

Coinciding with the GTC keynote, Google announced Gemini 3.1 Flash-Lite, a model optimized for the exact type of high-speed, low-cost inference NVIDIA’s new hardware enables. This "Inference War" between Google and NVIDIA (and by extension, Microsoft/OpenAI) is driving a rapid commoditization of intelligence, where the value is no longer in the model itself, but in the agentic workflow and proprietary data used to fine-tune it.

---

Implementation Guidance for Technical Leaders

Transitioning from RAG (Retrieval-Augmented Generation) to full agentic workflows requires a new architectural approach.

- Adopt the "Think-Act-Observe" Loop: Move away from single-prompt interactions. Design systems where the AI can formulate a plan, execute a tool (like a CRM or database query), observe the result, and iterate.

- Focus on Agent Interoperability: As highlighted by InfoWorld, the next frontier is the "Agent Economy," where agents from different platforms (e.g., an OpenAI research agent and a Google marketing agent) must negotiate and exchange services. Use open standards like the newly launched AAMP (Agentic Advertising & Marketing Protocol).

- On-Device vs. Cloud Hybridization: With the launch of the AMD Ryzen AI 400 Series (offering up to 60 NPU TOPS), enterprises should look to offload sensitive, low-latency agent tasks to the edge while reserving heavy reasoning for Rubin-powered cloud clusters.
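
The "Think-Act-Observe" loop from the first point can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the `think` function is a rule-based stand-in for a model call, and the tool names (`lookup_customer`, `discount_for`), the in-memory CRM data, and the `max_steps` budget are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple


# Hypothetical tools; a real system would wrap a CRM or database client here.
def lookup_customer(name: str) -> str:
    crm = {"acme": "tier=gold", "globex": "tier=silver"}
    return crm.get(name.lower(), "not found")


def discount_for(tier: str) -> str:
    return {"tier=gold": "20%", "tier=silver": "10%"}.get(tier, "0%")


TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_customer": lookup_customer,
    "discount_for": discount_for,
}


@dataclass
class AgentState:
    goal: str
    # Each entry records one completed step as (tool, argument, result).
    observations: List[Tuple[str, str, str]] = field(default_factory=list)


def think(state: AgentState) -> Optional[Tuple[str, str]]:
    """Stand-in for the model: pick the next (tool, argument), or None when done."""
    if not state.observations:
        return ("lookup_customer", state.goal)
    last_tool, _, last_result = state.observations[-1]
    if last_tool == "lookup_customer" and last_result != "not found":
        return ("discount_for", last_result)
    return None


def run_agent(goal: str, max_steps: int = 5) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):  # hard step budget so the loop always terminates
        action = think(state)  # Think: decide what to do next
        if action is None:
            break
        tool, arg = action
        result = TOOLS[tool](arg)  # Act: execute the chosen tool
        state.observations.append((tool, arg, result))  # Observe: record the result
    return state


if __name__ == "__main__":
    for step in run_agent("Acme").observations:
        print(step)
```

Keeping every step in `observations` gives the planner its context for the next decision and leaves an audit trail that can be inspected after the run.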

---

Risks and Ethical Considerations

Despite the optimism, NVIDIA and industry analysts raised several red flags:

- Silent Failure at Scale: Experts warn that the greatest risk in 2026 is not a dramatic system crash, but "silent failures" where agents make small, compounding errors across a business workflow that go undetected for weeks.

- Regulatory Pressure: New York's "Chatbot Liability Bill," which is currently advancing, could hold companies legally responsible for advice given or actions taken by their autonomous agents.

- The AI Bubble Fear: Jensen Huang explicitly addressed "bubble fears," arguing that the shift to "Physical AI" and "AI Factories" represents a tangible industrial revolution rather than a speculative software peak. However, the $1.4 trillion in data center commitments remains a high-stakes bet on continued demand.
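
The "silent failure" risk in the first point comes down to compounding arithmetic. The sketch below assumes every step in a workflow succeeds independently with the same probability, which is a simplification. The 99% accuracy and the step counts are illustrative figures, not numbers from the keynote, and both function names are hypothetical.

```python
def workflow_success_rate(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


def max_steps_for_target(per_step_accuracy: float, target: float) -> int:
    """Largest step count that keeps end-to-end success at or above target."""
    if not 0.0 < per_step_accuracy < 1.0:
        raise ValueError("per_step_accuracy must be strictly between 0 and 1")
    if not 0.0 < target <= 1.0:
        raise ValueError("target must be in (0, 1]")
    steps = 0
    while workflow_success_rate(per_step_accuracy, steps + 1) >= target:
        steps += 1
    return steps


if __name__ == "__main__":
    for n in (10, 50, 100):
        print(f"{n} steps at 99% per step: {workflow_success_rate(0.99, n):.3f}")
    # 10 steps  -> 0.904
    # 50 steps  -> 0.605
    # 100 steps -> 0.366
    print(max_steps_for_target(0.99, 0.90))  # 10
```

Under these assumptions, an agent that is right 99% of the time per step completes a 50-step workflow without error only about 60% of the time, which is why checks on intermediate steps matter more than checks on the final output alone.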

---

Conclusion: The Year AI Became Always-On

GTC 2026 marks the moment AI transitioned from a tool we use to an environment we inhabit. The Rubin architecture provides the nervous system for an economy run by agents. For technical and business leaders, the message is clear: the infrastructure is ready. The challenge now is not building the model, but building the workflow that allows that model to work autonomously, reliably, and at scale.

Primary Source

NVIDIA, TrendForce, Tech in Asia

Published: March 16, 2026